Changing machines in the Victorian Press

This project explored semantic change in the vocabulary of mechanization in nineteenth-century British newspapers using diachronic word embeddings and change-point detection techniques.

-

Questions

Industrial mechanization began in eighteenth-century Great Britain and accelerated dramatically in the nineteenth century. English, unsurprisingly, reflects these transformations at several linguistic levels, particularly in the lexicon, given the unprecedented pace of technological innovation.

- Given sufficiently large datasets, is it possible to automatically detect semantic change in words associated with mechanization?

- Do the changes detected align with findings in traditional scholarship (e.g. Görlach 1999, Kay & Allan 2015, Kytö et al. 2006)?

-

Challenge

Large-scale historical text data presents different challenges from those of contemporary datasets. It is often messy, marked by orthographic variation, and OCR-derived noise. This can make it difficult to train high-quality language models directly on the raw material.

At the same time, the scale of the corpus limits how much preprocessing can realistically be applied. Tasks such as lemmatization, PoS tagging, morphological analysis, or dependency parsing may become computationally impractical unless the corpus is heavily subsampled. In practice, even minimal preprocessing often requires combining pipelines from multiple libraries together with bespoke methods rather than relying on a single NLP toolkit.

-

Data

The dataset consisted of a digitized nineteenth-century newspaper collection comprising approximately 4.5 billion tokens, spanning the period 1800-1920. Around half of the material comes from the Heritage Made Digital digitization project and can be downloaded here. The other half was digitized as part of the Living with Machines project and can be downloaded here. Also see Westerling et al. (2025) for more details about the metadata available on these collections.

-

(Pre-)processing

The corpus was divided into bins of ten years each in order to obtain models representing individual decades of the nineteenth century. Each bin was preprocessed by lowercasing the text, removing punctuation, and filtering out tokens shorter than two characters.

-

Training

The embeddings were trained using Word2Vec (Mikolov et al. 2013) as implemented in Gensim (Řehůřek & Sojka 2010). After a grid search over the most important parameters, the final models were trained with the following configuration:

- sg: 1 (i.e. Skip-Gram rather than Continuous Bag-of-Words)

- epochs: 5

- vector_size: 200

- window: 3

- min_count: 1

-

Alignment

In order for the semantic spaces to be comparable, the models were aligned using Orthogonal Procrustes (Schönemann 1966), resulting in diachronic embeddings. The most recent decade (1910s) was kept fixed, with all earlier decades aligned to it.

-

Change point detection

Given a word (or set of words), we first calculate the cosine similarity between the vector representing that word in the most recent time slice (here the 1910s) and the corresponding vector in each earlier decade. The resulting similarity scores can be structured in a dataframe like the following:

timeslice traffic train coach wheel fellow railway match 1800s 0.42065081000328100 0.3247864842414860 0.48939988017082200 0.40280336141586300 0.4793526530265810 0.45957744121551500 0.5546759963035580 1810s 0.44998985528945900 0.37257763743400600 0.4604988396167760 0.4593697786331180 0.5891557335853580 0.47077488899231000 0.527800440788269 1820s 0.4821169972419740 0.3287739157676700 0.4415084719657900 0.5199856162071230 0.5828660130500790 0.414533793926239 0.5771894454956060 1830s 0.5448930859565740 0.5837113261222840 0.6515539884567260 0.634568989276886 0.6111024618148800 0.5849509239196780 0.5492534637451170 1840s 0.6072453856468200 0.7486659288406370 0.6239444613456730 0.6725128889083860 0.623650848865509 0.5770826935768130 0.5812575817108150 1850s 0.6091518402099610 0.7796339988708500 0.5971360206604000 0.4985277056694030 0.6289939284324650 0.6734532713890080 0.5770257711410520 1860s 0.6446579694747930 0.7618392705917360 0.603736162185669 0.5436286926269530 0.6926408410072330 0.609641432762146 0.6728477478027340 1870s 0.7729555368423460 0.8115466833114620 0.6379809379577640 0.652446448802948 0.7293833494186400 0.7228521108627320 0.6857554316520690 1880s 0.7656214237213140 0.8313593864440920 0.6118263006210330 0.5638831257820130 0.7863538861274720 0.7955769300460820 0.757251501083374 1890s 0.8245968818664550 0.8599095344543460 0.6886460185050960 0.7133383750915530 0.8441230058670040 0.8329502940177920 0.8200661540031430 1900s 0.8344136476516720 0.854633092880249 0.7280615568161010 0.7398968935012820 0.8377308249473570 0.8479636907577520 0.9051868915557860 1910s 1.0 1.0 1.0 1.0 1.0 1.0 1.0 As expected, the cosine similarity between a word vector and itself is 1, which is why the final row contains only 1s. We can then detect potentially significant change points in each column, that is, abrupt shifts in a word's semantic trajectory.

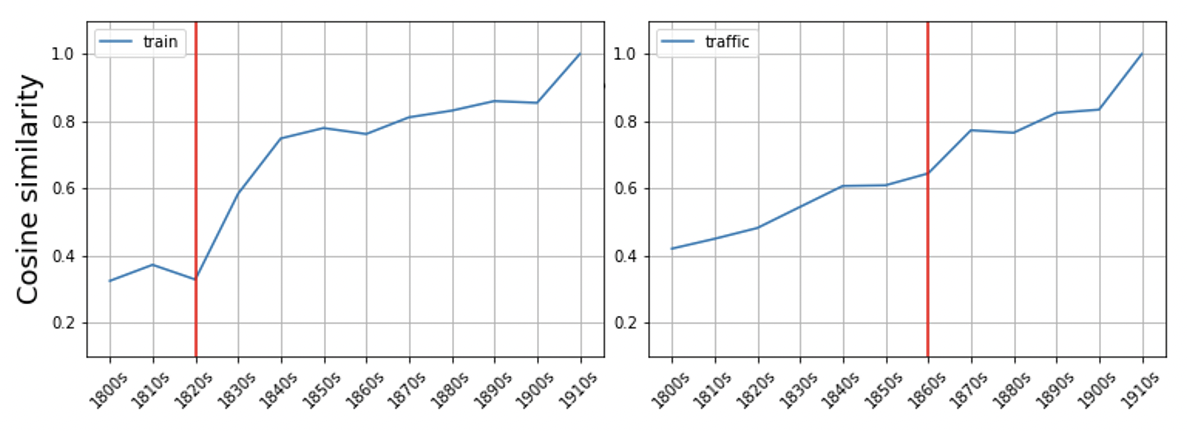

For this task we apply the PELT algorithm, as implemented in the ruptures library. In this example, change points are detected for train in the 1820s and traffic in the 1860s. The detected change points can then be visualized (the vertical line marks the detected shift):

-

Semantic trajectory visualization

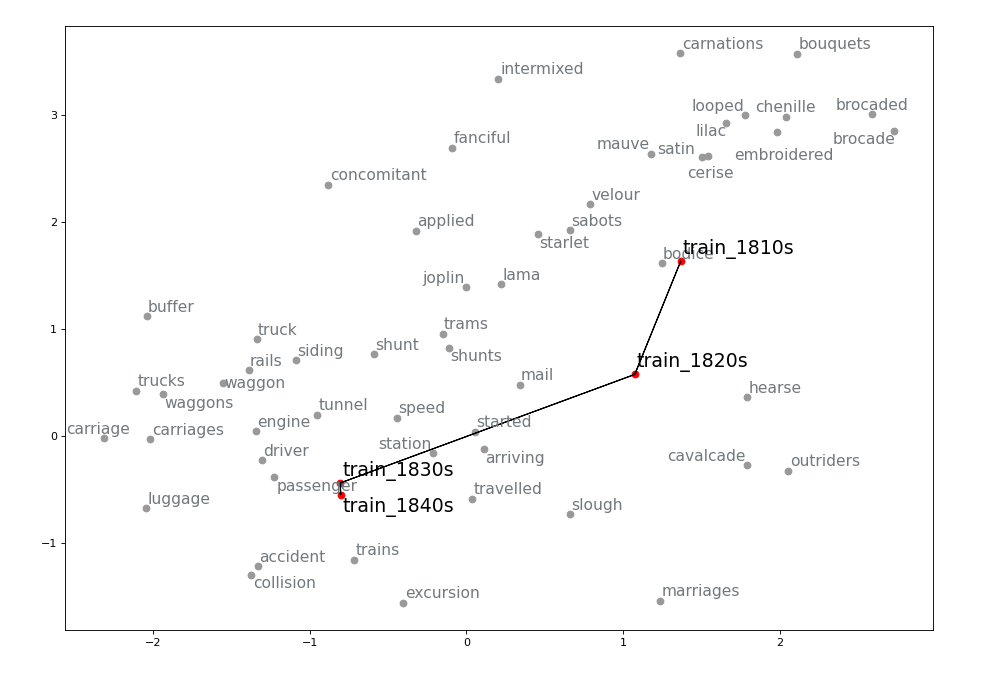

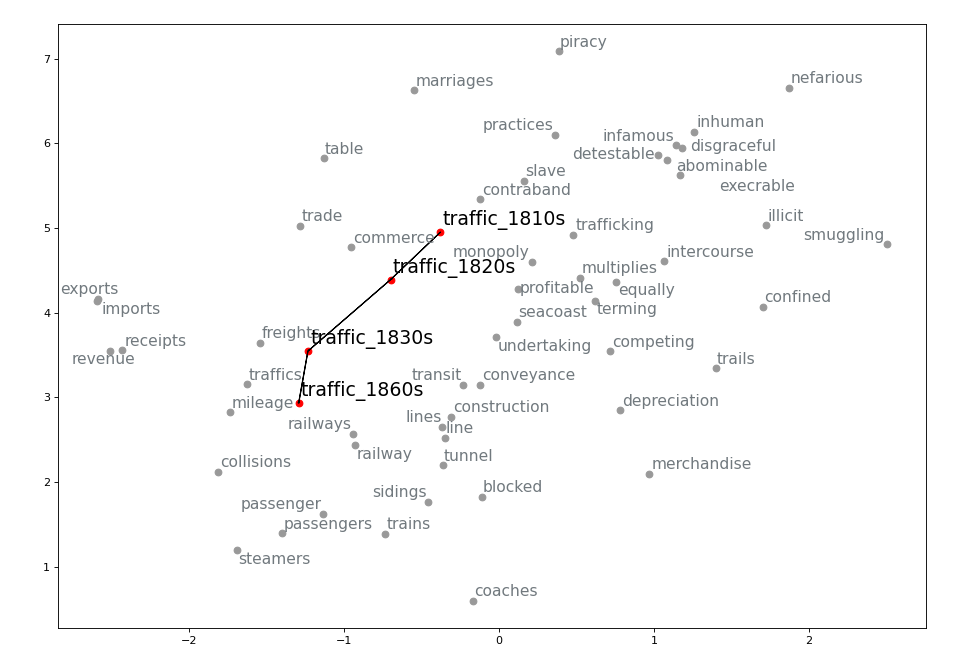

Building on the change point detection results, we can extract the k-nearest neighbours of a word for the decades surrounding a detected shift and visualize its semantic trajectory. For example, we can extract 30 neighbours across several decades for train and traffic.

Only neighbours present in the vocabulary of the most recent model included in the visualization are retained. Before visualization, vectors corresponding to OCR errors are filtered out or merged with the correctly spelled form (using the pyspellchecker package). The vectors of the target word itself are also included for each decade. Dimensionality reduction with t-SNE is then applied to visualize the vectors in two dimensions.

-

Evaluation

The results from change point detection were compared with semantic shifts discussed in the traditional literature. The comparison suggests that the diachronic models capture many of the changes identified by earlier scholarship. This indicates that models of this type can be used not only to confirm existing observations but also to explore research questions that have not yet been addressed in the literature.

-

Credits

This project was carried out within the Living with Machines project at The Alan Turing Institute.

See Pedrazzini & McGillivray (2022) for the full case study and Pedrazzini & McGillivray (2022), Pedrazzini (2023), and Pedrazzini & McGillivray (2023) for the released embeddings.

-

References

M. Görlach. 1999. English in Nineteenth-Century England: An Introduction. Cambridge: Cambridge University Press.

C. Kay & K. Allan. 2015. English Historical Semantics. Edinburgh: Edinburgh University Press.

M. Kytö, M. Rydén & E. Smitterberg. 2006. Nineteenth-Century English: Stability and Change. Cambridge: Cambridge University Press.

T. Mikolov, K. Chen, G. Corrado & J. Dean. 2013. Efficient estimation of word representations in vector space.

R. Řehůřek & P. Sojka. 2010. Software framework for topic modelling with large corpora. In Proceedings of the LREC Workshop on New Challenges for NLP Frameworks, 45–50, Valletta, Malta.

P. H. Schönemann. 1966. A generalized solution of the orthogonal Procrustes problem. Psychometrika 31, 1–10.

R. Al-Ghezi & M. Kurimo. 2020. Graph-based syntactic word embeddings. In Proceedings of TextGraphs.

O. Levy & Y. Goldberg. 2014. Dependency-based word embeddings. In Proceedings of the 52nd Annual Meeting of the Association for Computational Linguistics, pages 302–308.

N. Pedrazzini & B. McGillivray. 2022. Machines in the media: semantic change in the lexicon of mechanization in 19th-century British newspapers. In Proceedings of the 2nd International Workshop on Natural Language Processing for Digital Humanities, pages 85–95. Taipei, Taiwan: Association for Computational Linguistics.

B. McGillivray, N. Pedrazzini, A. Ciula, J. Lawrence, T. H. Ong, M. Ridge & J. Monteiro Vieira. 2025. Analysing the language of mechanisation in nineteenth-century British newspapers. In R. Ahnert, E. Griffin & J. Lawrence (eds.), Living with Machines: Computational Histories of the Age of Industry. London: University of London Press.

N. Pedrazzini & B. McGillivray. 2022. Diachronic word embeddings from 19th-century newspapers digitised by the British Library (1800–1919) [Dataset]. Zenodo.

N. Pedrazzini. 2023. Decade-level Word2Vec models from automatically transcribed 19th-century newspapers digitised by the British Library (1800–1919) [Dataset]. Zenodo.

N. Pedrazzini & B. McGillivray. 2023. Diachronic and diatopic word embeddings from newspapers digitised by the British Library (1830–1889): North and South England [Dataset]. Zenodo.

K. Westerling, K. Beelen, T. Hobson, K. McDonough, N. Pedrazzini, D. C. S. Wilson & R. Ahnert. 2025. LwMDB: Open metadata for digitised historical newspapers from British Library collections. Journal of Open Humanities Data 11, Article 32.

T. Hobson, K. Westerling, K. McDonough, D. C. S. Wilson, N. Pedrazzini & K. Beelen. 2024. LwMDB simplified exports [Dataset]. Zenodo.

Check out other projects